Professor Stephen Hawking provoked considerable debate recently by suggesting that we could have more to fear in future from the nature of capitalism than from armies of intelligent robots. The response was immediate, robust, deeply personal and entirely predictable.

The basic premise of the discussion was Hawking noting that, if most of the work of a future society was performed by machines, then how we occupied ourselves instead was much more of a social, political, economic, ethical, demographic, etc. question than it was technological. The rebuttal was essentially:

- That’s silly: the old jobs will be replaced by new ones,

- Please don’t say nasty things about capitalism,

- Scientists should stick to science.

So how much of this criticism was justified and how much of it was simply The Establishment closing ranks?

Well, it could certainly be argued that Hawking may not have got his point across well on this occasion. He’s believed to have made similar observations in previous, not-quite-so-global environments, both in more detail and with greater clarity. Perhaps this was his fault, perhaps it was the fragmented, social-media nature of the Reddit session; but the comment was easily picked up in isolation and trivialised, then reported as superficial and misrepresented as ‘Those nasty capitalists are going to replace us all with robots’. It’s much easier to find a counter-argument once you’ve repackaged the original argument in a form that suits you.

Without wishing to put words into Hawking’s mouth, there are probably two key observations behind what was essentially a small soundbite:

- The automated world we’re about to enter will be very different to the present one; the traditional model of the changing workplace may not apply. If the robots take the old jobs, they may take the new ones too.

- The numbers, the scale of all this, will be unprecedented, as may be the wider social upheaval. Alternatively, the existing economic frameworks might not change at all – which could be even worse for most people.

In both respects, people aren’t using the term singularity lightly.

The platitudes regarding the first point generally take the form of claiming that this is nothing new. True enough, we’ve had increasing automation in one form or another for centuries. The essential argument is that relieving humans of the mundane work leaves them free to be more creative and find more interesting things to do. Eventually, this widening of horizons leads to both further technological advances and new jobs in these new fields. One day, technology advances to the point where these jobs themselves become automated, people go off and do something else again, and the process repeats forever …

But it can’t repeat forever. That’s not what The Singularity is about. We’re looking ahead to a world in which machines/AI/robots – call them what you will – are better than us at everything. Faster, stronger, more accurate, longer-lasting and, from a conventional economic standpoint, cheaper. They’ll be better at both the existing jobs for which they replace us and the new ones that arise as a result. This is the repeated pattern that we should look to: one in which humans play no part at all. Why would anyone use a human for anything if a robot can do it better? Well, there may be an answer to that but it brings us to the second point …

In today’s world already, in fact, a number of people don’t work – or do very little. But, as a non-worker, how society treats you depends largely on who your parents are. It could be argued that, on the whole, current unemployment figures don’t include people who don’t need to work. However, whichever way you do the calculations, unemployment is generally fairly low. Most people work; and most of them work in difficult conditions, for too long, for low pay, largely for the benefit of either the more fortunate non-workers or much better-off workers. The ever-present threat is that this mundane existence is better than the alternative: that of becoming part of the less fortunate non-working community. These non-workers are generally despised compared with their affluent counterparts. In fact, non-workers make up the two extremes of the social spectrum.

Now, project this model forward into a future in which the majority of people don’t work. Say, for the sake of argument, that unemployment rates of 10% become more like 90%. The economists will howl at these figures but the roles are reversed now: it’s the economists that don’t understand the significance of the technological singularity.

With existing economics, can the majority of the population be supported to do nothing? (Or meditate or write poetry or play sport or something – although there’s a possibility the machines might be better at that too.) No, of course not. Because everything in the world today revolves around the competition to make profit. Nothing much happens if there’s nothing in it for someone. It’s anyone’s guess what might happen to a majority superfluous workforce. The only non-workers that will get by, just as now, will be those few that don’t need to work. That simply cannot be a stable system. There’s nothing essentially different to today in terms of the definitions of ‘haves’ and ‘have-nots’ but the balance will shift hugely in a numerical sense – probably well beyond the catastrophe point … another singularity or revolution to use different terminology.

Now, if we’re going to change any of this, there’s some considerable thinking-outside-the-box needed here. But when scientists, who are generally pretty good at that sort of thing, dare to try, it seems that they get slapped down by economists who are all too ready to point out that they might not understand the niceties of current economic models. No, they probably don’t. No, they’re not trying to. They can see that something much bigger is about to happen and the response can’t be conventional. But when a physicist starts to talk about AI and unemployment and politics and economics, taking the piss is very easy indeed – particularly if you’re a beneficiary of the current system and desperately don’t want it to change. But it’s going to have to change, and starting that process involves throwing out a lot of old, comfortable assumptions about the way the world works.

Just how hard this thought revolution can be in practice might be best illustrated by an example; sort of fictitious but not hard to associate with the real world …

Around the turn of the millennium, there was a British car manufacturer with a strongly unionised workforce. Rampant anarcho-syndicalism it wasn’t, but the workers did have a little more power and more say in what the company did than many elsewhere. Slowly they were able to improve their working conditions. The result was that the owners had to make more concessions to the workers, which meant less profit. Both factors led to a drop in quality in cars rolling off the production line and unrealistic prices compared to their competitors. Eventually, the company went bust. The result still stands as a case study in how not to do business. In fact, it’s often noted that, by the end, ‘the workers thought the company was there to give them work, rather than make cars’.

But … can we just for a moment entertain the idea that this might be a good thing? Why shouldn’t we have structures that put people before profits? If we’re not competing successfully against slave (sometimes even child) labour in other parts of the world, where really is the flaw in the system? Here or there? In a capitalist system, nothing happens unless there’s a profit in it for someone; that’s what drives the system – the whole world. Is it really impossible to reverse the logic? In an economic system that looked after people first, would we care that much if the cars weren’t much good? Well, the elite non-workers might but few others would if they were properly fed and living in peace. The elite would bang on about personal freedom – the rest of us would ignore them.

To put it another way, a good sub-system, failing within a bad global system, isn’t a bad sub-system: it points the way to a better global system.

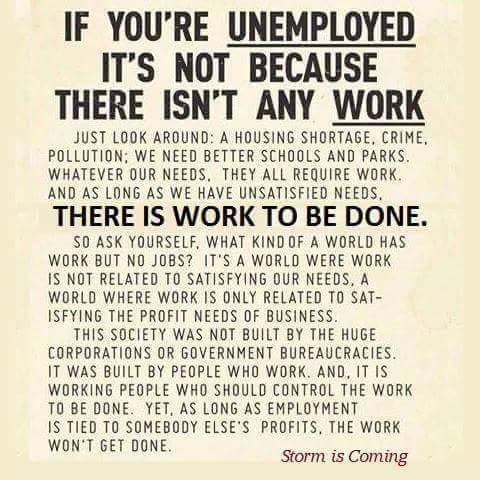

In fact, there is work to be done, whether it be by humans or robots, but it’s not being done at present because it isn’t profitable. Our hospitals and local amenities are falling down but they’re not being rebuilt because the economics aren’t worthwhile. People are starving when there’s food to feed them and dying when we have the medical knowhow to treat them but neither is happening because it doesn’t pay. It can’t be denied: profit comes before people in the world today. How can it even be moral to talk about the cost of a drug that will keep someone alive? Particularly when that cost can be considerably less than the elite throw away on a whim. It can’t. It isn’t ethical or moral: it’s economic, laced with politics. It’s capitalism.

(Of course, the principle doesn’t just apply to emerging technology. If the AI singularity doesn’t get us first, it will be something else – perhaps environmental oblivion? Capitalism can’t and won’t save the planet because no-one will profit from it and people are a secondary consideration.)

So, to return to the original 1, 2, 3 criticism of Hawking:

1. The old jobs will be replaced by new ones. No, not this time.

2. Don’t say nasty things about capitalism. Sorry, we have to: it’s going to be the death of us.

3. Scientists should stick to science. Well yes, there is some sense in this and, in fact, we’ve made the point before. But there’s nothing worse about a scientist making social comment than an economist doing the same. The economist is simply blinkered by the belief that our society and economy are the same thing, always will be and always have to be. Escape the notion of profit being the first and last word in everything and an economist is just an expert in playing Monopoly. They have no more insight into social structures (including possibly those with robots) than a scientist, or a poet, or a footballer.

But Hawking is right. Technology does have the potential to give us all a wonderful future. But it won’t; not unless we’re prepared to change the framework we’re going to place it in. If we don’t, it will make things worse.

So, ‘Will the robots take our jobs?’ isn’t the important question. (Yes, they will!) We should be asking ‘What’s the work that really needs to be done?’, ‘For whose benefit?’ and ‘What will we be doing while they’re doing it?’

September 8th, 2018 at 4:42 pm

We may end up in the position indicated in the movie Braveheart where Edward says (I think) “Send in the Irish, they are cheaper than arrows.”

A high tech robot may be quite expensive to purchase, maintain, and operate. Do we really want one to sweep our floors? No, send a human, they are cheaper!

This is the thinking currently and I see no movement to change it amongst the plutocrats. (If profits are your guide, they will not lead anywhere anyone wants to go.)

September 9th, 2018 at 12:10 am

This is a great article

September 16th, 2020 at 6:47 pm

[…] Remember how Hawking, just a few years before he died, was utterly vilified by the economic smartarses? Well, we’ve got a few more years under the belt now to see how that’s turning out. We’re beginning to see even more clearly how unprepared capitalism is for climate change and embryonic automation, for example, and how it’s been unable to put people before profit in the current Covid-19 pandemic. The merest glimmer at the end of the lockdown tunnel and the airports open again, for example. If the virus was (you choose) God’s/nature’s way of giving us a warning, we’ve clearly not taken it. It’s looking more and more as if economists telling scientists they’re unrealistic about technological fallout is like computer gamers telling real soldiers they don’t know anything about war. (Let’s face it, some people are better than others at playing Monopoly but, when there’s a real problem outside, sane people stop playing the game and go and deal with it.) […]